Someone recently asked me for help with a university project in which they had to predict whether a given article was fake news or not. They had a target accuracy of 70%. Since fake news has been in the news a lot, it made me think about how I would approach this problem and whether it is even possible to use machine learning to identify fake news. At first glance, this problem might seem comparable to spam detection, but it is actually much more complicated. In an article on The Verge, Dean Pomerleau of Carnegie Mellon University states:

“We actually started out with a more ambitious goal of creating a system that could answer the question ‘Is this fake news, yes or no?’ We quickly realized machine learning just wasn’t up to the task.”

The Definition of Fake News Matters

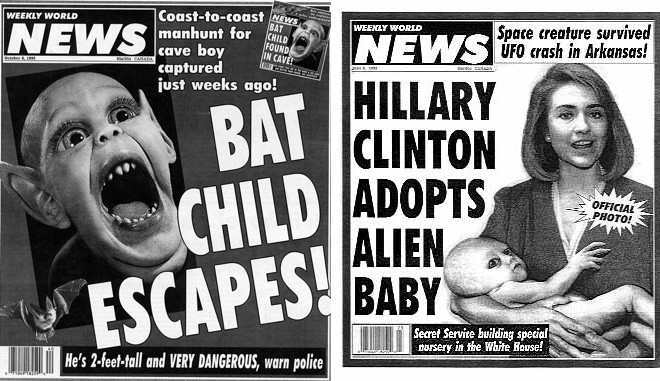

The first issue I see right off the bat is the definition of fake news. One could define fake news as articles which misrepresent the facts. But determining what “the facts” are can be quite complicated, even for humans. Would an article that is published but later proven to be incorrect be considered fake news? I would argue no. What about satire or humorous articles? Are they fake news? How about news from state-run propaganda services? What about heavily biased but factually accurate reporting? I could go on, but I think you get the idea.

The bottom line is that many people would say fake news is simply news that is false, and that something is either true or not, but they are really oversimplifying things. In fact, I suspect that many articles which could be considered fake news DO contain some truth.

I would define fake news as publications which deliberately mislead the reader. But this poses a problem: determining intent is quite difficult, even for humans. Distinguishing fake news from legitimate news requires knowledge of the facts, which is not something that traditional machine learning has.

An Example…

Let’s look at a real-life example. Recently in the New York Times, there was an article with the headline: Nikki Haley’s View of New York Is Priceless. Her Curtains? $52,701. (https://www.nytimes.com/2018/09/13/us/politics/state-department-curtains.html) The facts of this article were essentially correct. The State Department spent $52k on new curtains for the US Ambassador’s residence in New York. However, the article insinuated that Ambassador Haley was the person responsible for this spending when in fact it was the previous US Ambassador to the UN.

The New York Times has been rightly called out for this piece of poor reporting by CNN, The Washington Post and many other reputable news sources. But here’s the question. How could a machine detect that as being fake news? What characteristics distinguish that article from other legitimate news articles?

If I were designing a system to detect fake news, I might consider things like the reputation of the author and publisher, the style of language, the number of adjectives/adverbs used, etc. But I suspect that this article would be undetectable using this methodology. It came from a reputable source, it was well written, and so on.
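To make that concrete, here is a minimal sketch of what such surface features might look like in Python. Everything in it is an assumption for illustration: the `PUBLISHER_REPUTATION` table and its scores are made up, `extract_features` is a hypothetical helper, and counting words that end in "ly" is only a crude stand-in for real adverb detection. It is not a working fake news detector.

```python
import re

# Hypothetical reputation scores in [0, 1]; the values are made up for illustration.
PUBLISHER_REPUTATION = {"nytimes.com": 0.9, "example-clickbait.test": 0.1}


def extract_features(text, publisher):
    """Return a few crude stylistic features for one article."""
    words = re.findall(r"[A-Za-z']+", text)
    n = len(words) or 1  # avoid dividing by zero on empty text
    # Crude adverb proxy: longer words ending in "ly" (an assumption, not a real POS tagger).
    ly_words = [w for w in words if len(w) > 3 and w.lower().endswith("ly")]
    shouted = [w for w in words if len(w) > 1 and w.isupper()]
    return {
        # Unknown publishers get a neutral 0.5.
        "publisher_reputation": PUBLISHER_REPUTATION.get(publisher, 0.5),
        "adverb_ratio": len(ly_words) / n,
        "exclamation_count": text.count("!"),
        "all_caps_ratio": len(shouted) / n,
    }


sober = extract_features(
    "The State Department spent $52,701 on curtains for the ambassador's residence.",
    "nytimes.com",
)
loud = extract_features("SHOCKING! They totally lied!", "unknown.test")
print(sober)
print(loud)
```

Notice that a sober, well-written sentence from a reputable publisher scores as perfectly "clean" on every one of these features, which is exactly the problem: nothing here measures whether the article misleads the reader.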

Machine Learning Has Limits

What I’m getting at is that current machine learning methods have limitations. People have a hard time distinguishing between real and fake news, so why should we expect machines to do better?

I suspect the answer to this question is hype. Machine Learning and Artificial Intelligence have been so overhyped that few are trying to determine what the actual limits of the technology are. Indeed, many luminaries regularly make absurd statements about AI taking over the world, or worse. I have no doubt that AI and Machine Learning will continue to develop and amaze us with the possibilities, but here and now there’s still a long way to go.